One tree One life

USA

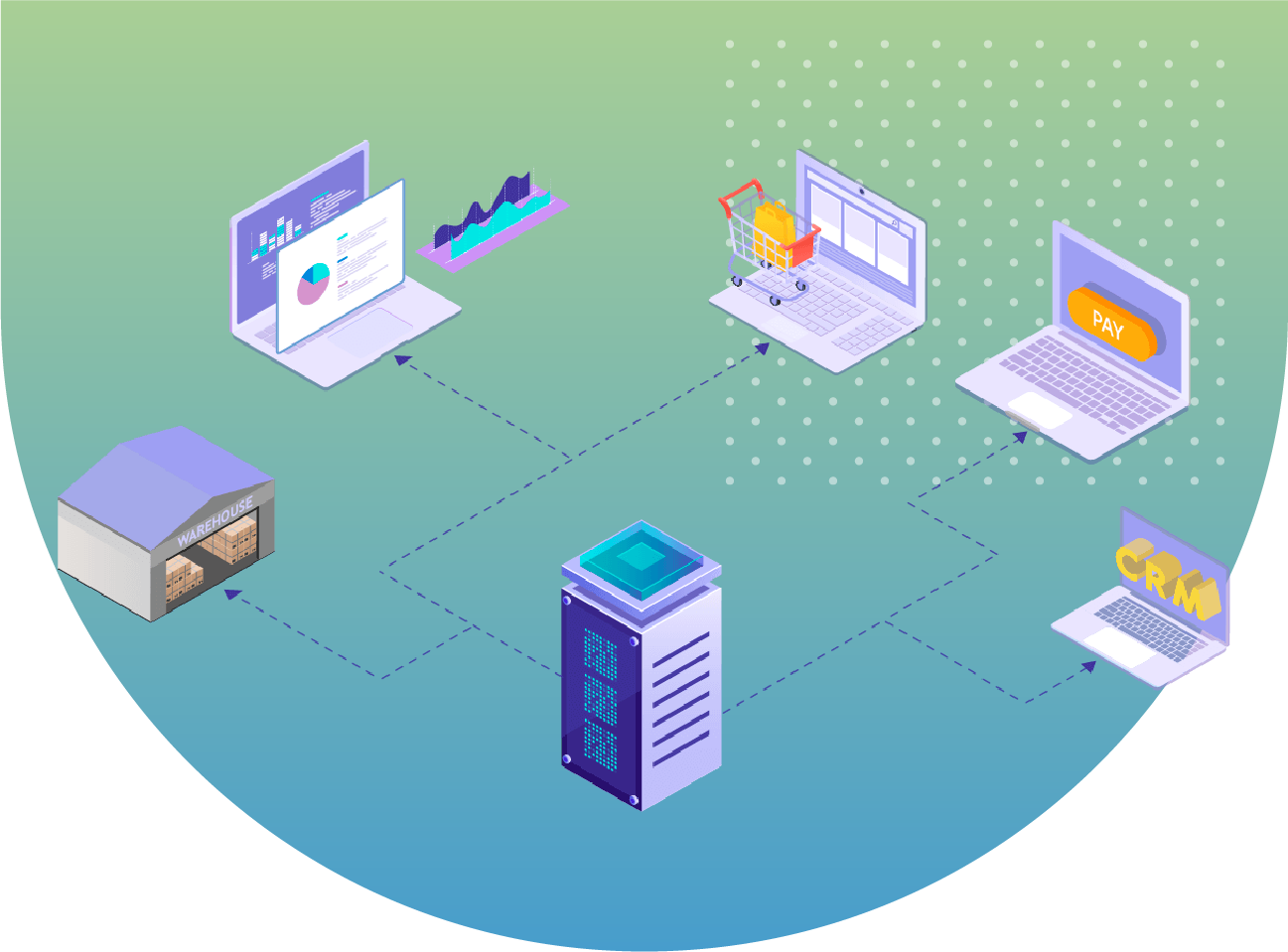

SQL Server, Oracle, Informatica, Rabbit MQ

Sales & Distribution

Business Problem

With the increased volume of product data changes driven by ever-changing business needs, and the need to create multiple test environments driven by agile adoption, it becomes significantly more difficult to support the delivery of product changes on a daily basis.

Most of the data management teams' time goes into supporting product data distribution, leaving little focus on innovation.

The end result is that the product data distribution and deployment process becomes a bottleneck – impacting business agility and competitiveness.

Technical Problem

The existing data distribution process was dated and had several drawbacks:

Product data distribution and deployment happen only once a day. Any subsequent change to product data requires a 24-hour window to be reflected in all systems.

With an increase in data volume over a period of time, systems often face stability issues due to high load.

It typically takes 5-6 hours to complete data distribution and deployment across all downstream systems, which delays end-to-end integration testing.

There are many manual touch points that require coordination among multiple dev and testing teams.

As part of their DevOps journey, the teams decided to work closely on improving the process, with the aim of achieving the following design goals:

Multiple, smaller, on-demand data deployments instead of one large scheduled deployment

Delta refresh instead of full refresh

Automated, one-click data deployment instead of manual touch points

Near-zero downtime in test environments

There were several architectural challenges in meeting the above design goals. Out-of-the-box thinking and the use of open-source tools allowed the teams to meet the stated objectives. Some of the key architecture changes are:

Download & Delta Refresh: In the existing process, every system refreshes its local copy of the data completely, irrespective of how much has changed. The new design introduces an intermediate layer that lets each system perform a delta refresh only. This greatly reduced the time needed to download data from the source and refresh it in the local system.
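The case study does not describe how the intermediate layer computes a delta, so the following is only a minimal sketch of the general idea, assuming product records are keyed by an ID and that the previous and current snapshots fit in memory. The names `compute_delta`, `apply_delta` and the sample product IDs are hypothetical.

```python
from typing import Any, Dict, NamedTuple, Set

Record = Dict[str, Any]


class Delta(NamedTuple):
    upserts: Dict[str, Record]  # new or changed records, keyed by product id
    deletes: Set[str]           # product ids no longer present in the source


def compute_delta(previous: Dict[str, Record], current: Dict[str, Record]) -> Delta:
    """Compare two snapshots and keep only what changed between them."""
    upserts = {
        key: record
        for key, record in current.items()
        if key not in previous or previous[key] != record
    }
    deletes = set(previous) - set(current)
    return Delta(upserts, deletes)


def apply_delta(local: Dict[str, Record], delta: Delta) -> None:
    """Bring a downstream system's local copy up to date without a full reload."""
    local.update(delta.upserts)
    for key in delta.deletes:
        local.pop(key, None)


# Hypothetical sample data: one unchanged, one changed, one removed, one added.
previous = {"p1": {"price": 10}, "p2": {"price": 20}, "p3": {"price": 30}}
current = {"p1": {"price": 10}, "p2": {"price": 25}, "p4": {"price": 40}}

delta = compute_delta(previous, current)
local_copy = dict(previous)
apply_delta(local_copy, delta)
```

In this sketch only two records are transferred and one is deleted, rather than the whole data set being reloaded, which is where the time saving of a delta refresh would come from.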

Synchronize Data Refresh: With multiple systems involved, each having its own data storage, format and ETL process, the major question was ‘how to synchronize data refresh across all impacted systems?’ Because each system takes its own time to refresh data, one system can finish refreshing while another is still in progress, which leaves the systems out of sync.

To solve this problem, a notification process was implemented using RabbitMQ, an open-source message broker. Every time product data changes, a notification message is sent to each downstream system through RabbitMQ so that each consumer can react to the event.
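As an illustration of such a notification, here is a minimal sketch of a publisher. It assumes a fanout exchange (so every bound downstream queue receives a copy) and a JSON payload; the exchange name, the payload fields and the function names are hypothetical and not taken from the case study. The `channel` argument is expected to behave like a channel from the `pika` client, whose `exchange_declare` and `basic_publish` methods are used.

```python
import json
from typing import Iterable

EXCHANGE = "product-data-changes"  # hypothetical exchange name


def build_notification(change_id: str, changed_keys: Iterable[str]) -> bytes:
    """Serialize a change event; the field names are illustrative assumptions."""
    payload = {
        "event": "product_data_changed",
        "change_id": change_id,
        "changed_keys": sorted(changed_keys),
    }
    return json.dumps(payload).encode("utf-8")


def publish_change(channel, change_id: str, changed_keys: Iterable[str]) -> None:
    """Broadcast one change event to every queue bound to the exchange."""
    # A fanout exchange ignores the routing key and copies the message
    # to all bound queues, one per downstream system in this sketch.
    channel.exchange_declare(exchange=EXCHANGE, exchange_type="fanout", durable=True)
    channel.basic_publish(
        exchange=EXCHANGE,
        routing_key="",
        body=build_notification(change_id, changed_keys),
    )


# Usage against a real broker (requires the pika package and a running RabbitMQ):
#   import pika
#   connection = pika.BlockingConnection(pika.ConnectionParameters("localhost"))
#   publish_change(connection.channel(), "chg-001", ["p2", "p4"])
#   connection.close()
```

Each downstream system would bind its own queue to the exchange and trigger its refresh when a message arrives. A topic or direct exchange would work as well if only some systems should be notified about a given change.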

Zero downtime: To ensure that a data deployment does not impact ongoing testing, every system first deploys the data to an offline database. Once the latest data is confirmed to be available in all systems, a notification is sent to each system to flip from the offline to the online database.
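The confirm-then-flip step can be sketched as a small coordinator that waits for every system to report that its offline database is loaded and only then sends the flip notification. This is an assumption about how such coordination might look, not the teams' actual implementation; `FlipCoordinator`, `mark_ready` and the system names are hypothetical, and `send_flip` stands in for whatever transport delivers the notification.

```python
from typing import Callable, Iterable, Set


class FlipCoordinator:
    """Sends the flip notification only after all systems confirm readiness."""

    def __init__(self, systems: Iterable[str], send_flip: Callable[[str], None]):
        self._expected: Set[str] = set(systems)
        self._ready: Set[str] = set()
        self._send_flip = send_flip
        self.flipped = False

    def mark_ready(self, system: str) -> bool:
        """Record that a system finished loading its offline database.

        Returns True once the flip has been sent to every system.
        """
        if system not in self._expected:
            raise ValueError(f"unknown system: {system}")
        self._ready.add(system)
        if not self.flipped and self._ready == self._expected:
            # Every offline copy holds the latest data, so switching now
            # keeps all systems in sync and avoids a visible outage.
            for name in sorted(self._expected):
                self._send_flip(name)
            self.flipped = True
        return self.flipped


# Hypothetical downstream systems; the list collects the flip notifications.
sent = []
coordinator = FlipCoordinator(["billing", "catalog", "orders"], sent.append)
coordinator.mark_ready("catalog")   # still waiting for the others
coordinator.mark_ready("orders")    # still waiting for billing
coordinator.mark_ready("billing")   # last confirmation triggers the flip
```

Until the last confirmation arrives, the online databases keep serving the previous data, so ongoing tests are not interrupted by systems that refresh at different speeds.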
